The Creative Middleman

Adobe’s AI Identity Crisis and What It Means for the $257 Stock

When Your Flagship AI Product Routes to Competitors’ Models to Produce Readable Text, the Market Notices

Jeep Marshall, LTC, US Army (Retired)

Airborne Infantry | Special Operations | Process Improvement

February 2026

Paper 4 in the “Herding Cats in the AI Age” Series

“Scaling AI agents is herding cats. The cats are brilliant, tireless, and fast — but nobody told them where the barn is, and half of them are chasing mice that don’t exist.” — Jeep Marshall

TEAM COMPOSITION AND METHODOLOGY

This paper deploys a multi-disciplinary analytical team. Each expert brings a distinct lens to the same problem set, and their assessments converge into a unified SWOT framework and investment thesis.

Lean Six Sigma Black Belt (LSS-BB): Value stream analysis of Adobe’s AI delivery pipeline. Waste identification using DOWNTIME framework. Process capability assessment of Firefly’s native generation vs. partner model routing.

Quality Assurance Standards Analyst (QASA): Source verification for all stock data, analyst ratings, and technical claims. Cross-reference validation against Adobe’s own documentation and SEC filings.

Supply-Chain / Dependency Reviewer: Dependency risk assessment of Adobe’s partner model architecture. Supply chain vulnerability analysis. Platform lock-in vs. vendor diversification trade-offs. (This paper applies the Safety & Security lens of the ASS2 framework — Automation, Structure & Scalability, Safety & Security — in single-domain mode, since the analysis scope is supply-chain dependency risk rather than a full three-domain review.)

Creative Arts Practitioner: Hands-on comparative testing of Adobe Firefly native models vs. partner models using identical prompts. Visual evidence documentation.

Observer-Controller (OC): Scope management, team coordination, and quality gate enforcement.

EXECUTIVE SUMMARY

Adobe trades at $257.78 as of February 2026. The stock declined 43% over the past year.1 Analysts maintain a consensus Buy rating with mean price targets between roughly $409 and $423, implying about 58% to 64% upside.2 This paper argues that the analyst consensus misses a structural weakness that hands-on testing exposes: Adobe’s flagship generative AI product, Firefly, delivers its best results by routing prompts to competitors’ models, which makes Adobe a creative middleman, not a creative engine.

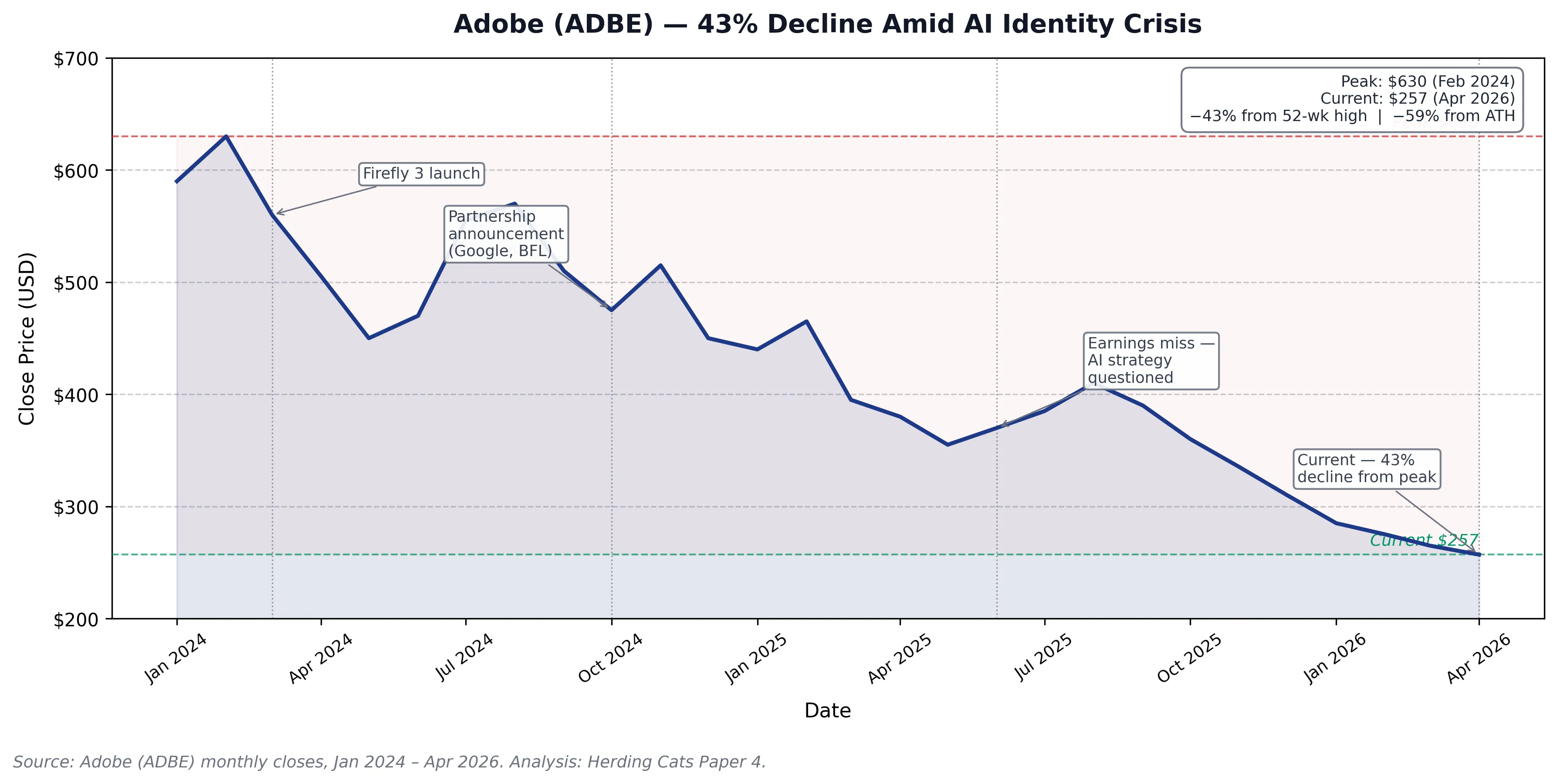

Figure 1: Adobe (ADBE) monthly close prices, January 2024 – April 2026. Annotated events: Firefly 3 launch (Mar 2024), partnership announcements with Google/Black Forest Labs (Oct 2024), earnings miss and AI strategy questioning (Jun 2025), current price (Apr 2026). Peak-to-current decline: −43% from 1-year peak, −59% from all-time high.

Adobe’s entire growth narrative rests on AI. Over 35% of Photoshop subscribers use generative AI features.3 More than $5 billion in annual recurring revenue comes from users engaging with AI capabilities.4 When those features depend on Google’s Gemini, Black Forest Labs’ FLUX, Ideogram’s text rendering, and Runway’s video generation, Adobe’s competitive moat narrows from “we build the best creative AI” to “we host the best creative AI that other companies build.”

The military calls this a single point of failure in the supply chain. Lean Six Sigma practitioners call it outsourcing your core value proposition. Investors call it revenue risk.

1. THE THESIS: ADOBE BECAME A PLATFORM, NOT A PRODUCT

Paper 1 established that AI systems possess extraordinary cognitive horsepower but lack the discipline, structure, and operational maturity to deploy that power at scale. Paper 2 demonstrated that the military already solved the coordination problem civilian AI companies keep rediscovering. Paper 3 proved that a single practitioner’s knowledge vault became an accidental laboratory for multi-agent coordination.

Paper 4 applies these frameworks to a specific company making a specific strategic bet: Adobe’s decision to transform Firefly from a proprietary AI engine into a multi-model marketplace.

At Adobe MAX in October 2025, CEO Shantanu Narayen announced an expanded strategic partnership with Google Cloud, integrating Gemini, Veo, and Imagen directly into Adobe’s applications.5 By February 2026, Firefly’s model dropdown includes partner models from Google, Black Forest Labs, Ideogram, Luma AI, OpenAI, Pika, Runway, Moonvalley, ElevenLabs, and Topaz Labs.6 Adobe positions this as “more choice, more control, more creativity.”7

The LSS-BB identifies this differently: Adobe recognized its native models cannot compete across all creative dimensions, so it pivoted from product company to platform company — mid-stride, mid-market, while the stock price tracked the transition in real time.

2. THE EVIDENCE: SAME PROMPT, DIFFERENT ENGINES, DEVASTATING RESULTS

The Creative Arts practitioner conducted a controlled head-to-head comparison using identical prompts submitted through Adobe Firefly’s interface. The only variable: the model selection dropdown, switching between Adobe’s native Firefly Image Model 5 and Google’s Gemini 2.5 Flash Image.

Test 1: Technical Wireframe Diagram

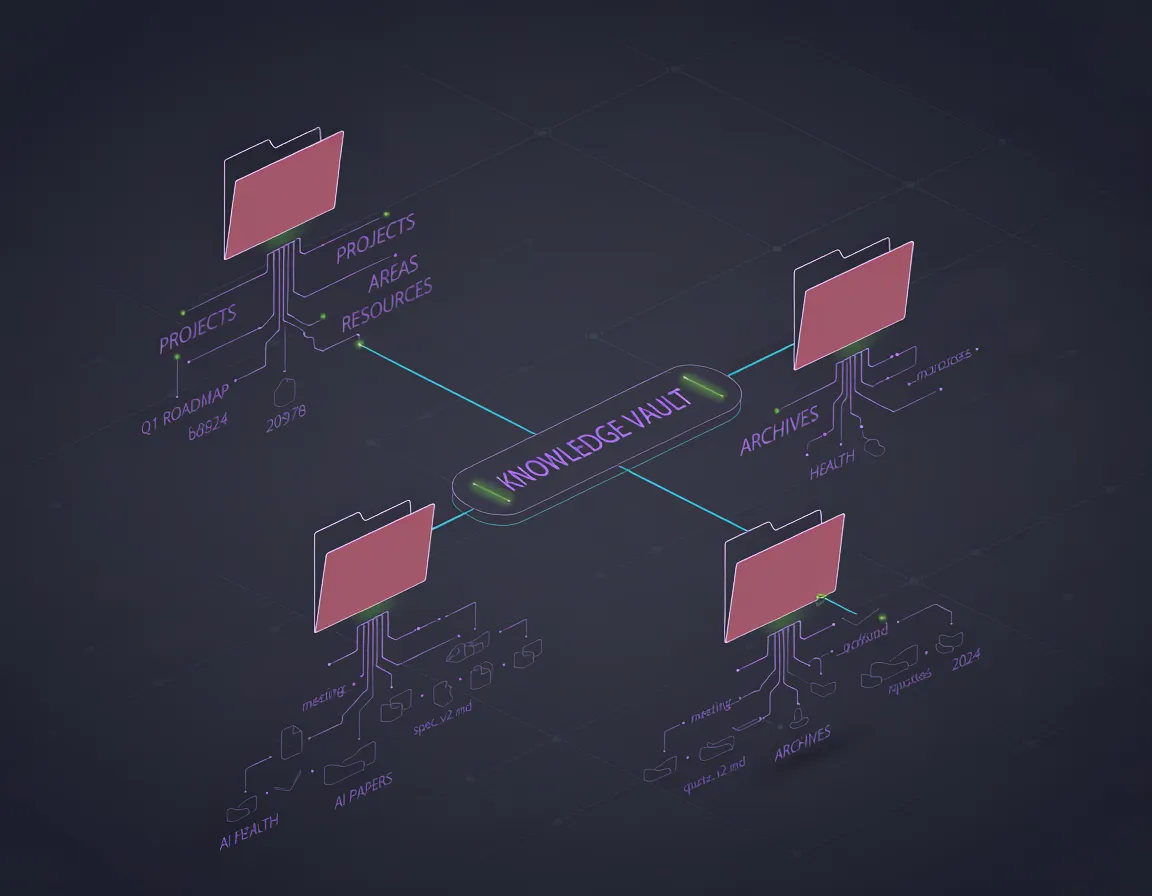

Prompt: “A wireframe architecture diagram of a PARA method knowledge vault (Projects, Areas, Resources, Archives) in Dracula theme colors”

Firefly Image Model 5 Result: The native model produced a dark-themed visualization with folder icons and connecting lines. Top-level labels partially rendered (“PROJECTS,” “KNOWLEDGE VAULT,” “ARCHIVES”) mixed with gibberish sub-labels (“b8524,” “20978”) and duplicated category names. No functional hierarchy. No logical data flow. Concept art for a video game loading screen — beautiful but operationally worthless.

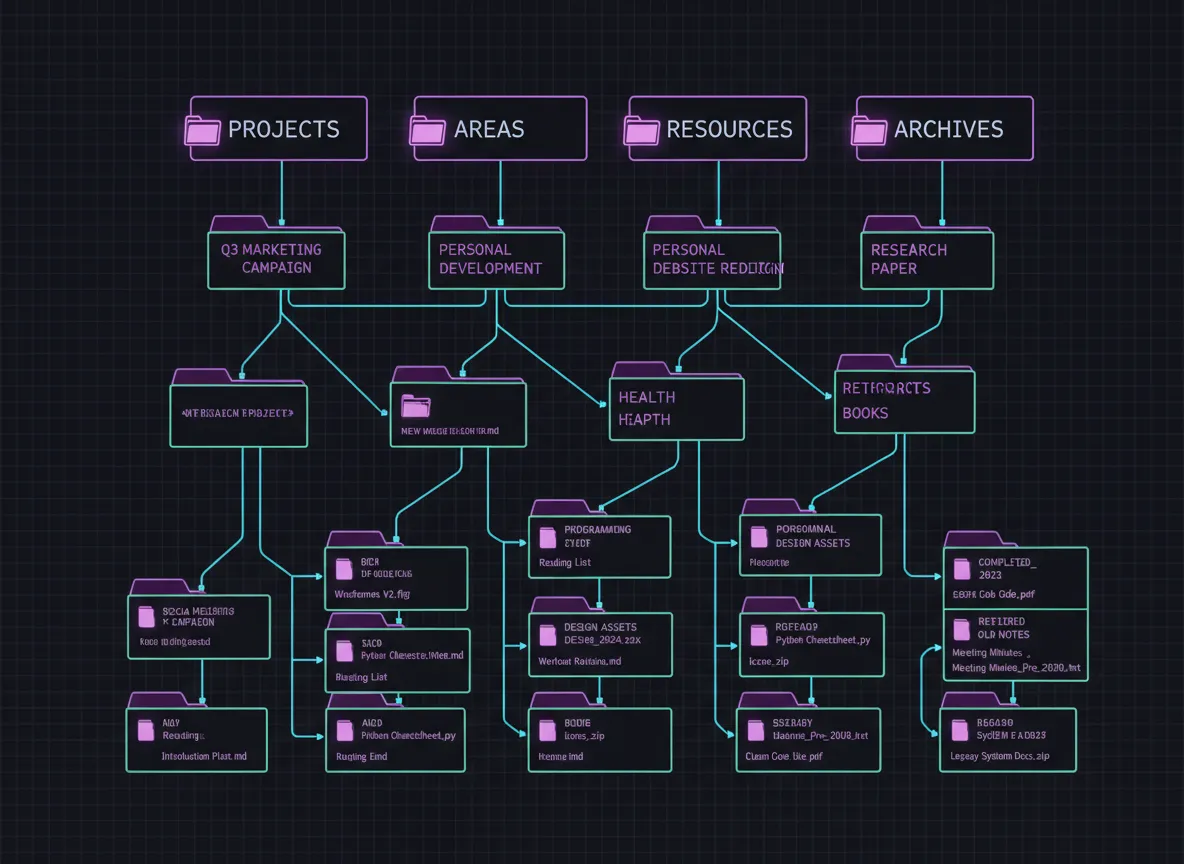

Gemini 2.5 Flash Image (via Firefly) Result: The partner model produced a structured, hierarchical diagram with all four PARA categories clearly labeled. Sub-items included contextually appropriate labels (“Q3 Marketing Campaign” under Projects, “Personal Development” under Areas). The hierarchy flowed logically from top to bottom with proper parent-child relationships.

LSS-BB Assessment: The Firefly native model produced a defect. By any quality standard — first pass yield, right-first-time, fitness for use — the output fails. The Gemini model, accessed through the same interface, same subscription, same credit system, delivered a substantially conforming output. Adobe’s own platform demonstrates, in real time, that its proprietary model cannot match a partner model for structured content generation.

Test 2: Creative Image with Text Rendering

Prompt: “A pack of sleek black cats with glowing cyan bioluminescent circuit-board markings” (with text overlay from the “Herding Cats in the AI Age” series branding)

Firefly Image Model 5 Result: Visually striking cyberpunk cats with glowing circuit-board markings, hexagonal network elements, atmospheric depth. Then the text appeared: “BEARETIXSLUGE” across the bottom. Below it: “PA’TEXCACT LEFIMENT.” Complete gibberish. Not a misspelling — a fundamentally broken text generation system that treats letterforms as shapes rather than language.8

The failure has a precise technical cause. Modern image generation systems — Firefly, Stable Diffusion, Imagen — use latent diffusion: a process that progressively denoises a signal in a compressed latent space, guided by CLIP-aligned text embeddings that encode semantic meaning.9 CLIP (Contrastive Language–Image Pretraining) learns to associate images with their descriptions at the level of visual concepts and object categories.10 It does not model character-level structure. Letters emerge from the diffusion process as texture patterns — visually plausible shapes that mimic letterforms — not as sequentially generated glyphs with linguistic constraints. Text rendering is not a compute problem. It is an architectural mismatch between how latent diffusion models process visual patterns and how written language actually works.11

Gemini 2.5 Flash Image (via Firefly) Result: The partner model generated similar cyberpunk cats with circuit-board markings. The text rendered: “HERDING CATS IN AI AGE” — clear, bold, readable, correct. Same prompt. Same platform. Same subscription. Same credit cost.

QASA Assessment: Adobe’s own help documentation confirms this limitation: “Text and symbol generation in images still needs support in the Text to Image feature.”12 Adobe’s community forums go further: “Adobe Firefly (and many other AI models) cannot spell or recognize legible languages yet to render an accurate output.”13 An IEEE Transactions on Visualization and Computer Graphics survey (March 2024) identifies text generation accuracy across all major AI image platforms at below 45% for complex text integration.14 When Google’s Gemini renders text correctly through Adobe’s own platform, the comparison becomes impossible to ignore.

3. THE MIDDLEMAN ARCHITECTURE: AN LSS-BB VALUE STREAM ANALYSIS

The LSS-BB maps Adobe’s AI value stream as follows:

Pre-Partnership (2023-2024): User → Adobe Firefly Interface → Adobe Firefly Models → Output

Every step in the chain was Adobe. The value proposition was vertical integration: one subscription, one ecosystem, one company responsible for quality.

Post-Partnership (2025-2026): User → Adobe Firefly Interface → Model Selection Dropdown → {Adobe Model OR Google Gemini OR FLUX OR Ideogram OR Runway OR OpenAI GPT Image OR Luma AI OR Pika OR Moonvalley OR ElevenLabs OR Topaz} → Output

Adobe now controls the interface and the billing. The creative engine — the core value generation step — runs on technology Adobe does not own, did not build, and cannot control the development roadmap for.

This architecture has a formal analog in machine learning: the Mixture of Experts (MoE) paradigm.15 In MoE architectures, a routing function — a trainable gate — directs each input to the specialized expert most likely to handle it correctly: G(x) = softmax(W_g · x), where W_g is the learned gating weight matrix and x is the input representation.16 Adobe’s post-partnership architecture is an externalized MoE: the model selection dropdown is the routing layer; Google Gemini, FLUX, Ideogram, and Runway are the expert networks. In canonical MoE implementations, the gate is learned end-to-end with the experts. Adobe’s routing function is not learned. It is the user. Adobe has outsourced the expert weights to partners it does not control, retained the gating function, and handed that gating function to customers who did not sign up to be model engineers.
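The gating function above can be sketched in a few lines of Python. This is a minimal illustration, assuming a hypothetical three-expert setup with hand-picked weights. The expert names, the feature encoding of x, and the values in `W_G` are invented for the example and say nothing about how Adobe or its partners actually route requests.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def gate(w_g, x):
    """Compute G(x) = softmax(W_g . x) for a single input vector x."""
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in w_g]
    return softmax(logits)


# Hypothetical experts and gating weights, chosen only for illustration.
EXPERTS = ["native", "partner_text", "partner_video"]
W_G = [
    [1.0, 0.0, 0.0],  # row scoring the "native" expert
    [0.0, 2.0, 0.0],  # row scoring the "partner_text" expert
    [0.0, 0.0, 2.0],  # row scoring the "partner_video" expert
]


def route(x):
    """Return the expert with the highest gate probability, plus all probabilities."""
    probs = gate(W_G, x)
    best = max(range(len(probs)), key=probs.__getitem__)
    return EXPERTS[best], probs


# x is a made-up encoding: (photo signal, text-in-image signal, video signal).
expert, probs = route([0.2, 0.9, 0.1])
print(expert, [round(p, 3) for p in probs])
```

In a learned MoE, `W_G` would be trained jointly with the experts. In the dropdown architecture described above, the user performs the `route` step by hand on every generation.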

Waste Identification (DOWNTIME):

Defects: Firefly native models produce gibberish text, distorted faces, and non-functional diagrams. Users must regenerate or switch models.

Overproduction: Adobe ships 10+ partner models. Users report “choice paralysis” trying to determine which model fits which use case.17 The user does the work Adobe’s engineering team did not — matching capability to requirement.

Waiting: Users burn credits on Firefly native outputs, discover the defect, then re-run the same prompt on a partner model. Double the credits. Double the time.

Non-Utilized Talent: Adobe employs thousands of engineers building Firefly models that increasingly get bypassed for partner alternatives.

Transportation: Prompts route from Adobe’s interface to third-party model APIs and back. Latency, dependency risk, and data governance complexity.

Inventory: Adobe accumulates partner model agreements, each with its own terms, pricing, capability lifecycle, and deprecation schedule.

Motion: Users toggle between model dropdowns, compare outputs, and manually evaluate which engine best serves their specific creative need. This is selection waste — the user performs quality assurance that the platform itself should handle.

Extra-Processing: Adobe wraps partner model outputs in Content Credentials metadata, IP indemnification layers, and commercial licensing frameworks. Valuable for enterprise compliance — but it means Adobe’s differentiated value add is increasingly legal/compliance packaging, not creative generation.

4. ADOBE STOCK: THE NUMBERS BEHIND THE NARRATIVE

Current Position (February 28, 2026)

| Metric | Value | Source |
|---|---|---|
| Current Price | $257.78 | NASDAQ, Feb 26, 2026 |
| 52-Week High | $464.33 | Feb 18, 2025 |
| 52-Week Low | $251.10 | Feb 12, 2026 |
| YoY Decline | -43% | Benzinga |
| YTD Decline | -23% | Benzinga |
| Moving Averages | Trading below 50-day and 200-day MAs | Multiple sources |
| RSI | 26.10 (oversold territory) | CoinCodex |

Revenue Picture

| Metric | Value |
|---|---|
| FY2025 Revenue | $23.77 billion (+11% YoY) |
| AI-Influenced ARR | $5+ billion |
| Photoshop AI Adoption | 35%+ of subscribers |
| Monthly Active Users | 700 million (+25% YoY) |
| Next Quarter EPS Estimate | $5.86 |
| Next Quarter Revenue Estimate | $6.28 billion |

Analyst Sentiment

| Source | Rating | Price Target |
|---|---|---|
| Wall Street Consensus (21 analysts) | Buy | $409.14 avg (+58% upside) |
| TipRanks (27 analysts) | Moderate Buy | $421.52 avg |
| Benzinga (27 analysts) | Buy | $423.50 avg |
| Target Range | — | $280 (low) to $540 (high) |
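The upside figures attached to these targets follow from a simple ratio of target to current price. The sketch below reproduces that arithmetic using the $257.78 price and the three average targets quoted in the table. The function and variable names are illustrative, not taken from any data provider.

```python
def implied_upside(price, target):
    """Fractional upside implied by a price target relative to the current price."""
    return target / price - 1.0


PRICE = 257.78  # current price quoted in this paper
TARGETS = {
    "Wall Street Consensus": 409.14,
    "TipRanks": 421.52,
    "Benzinga": 423.50,
}

# Upside in percent, rounded to one decimal place.
upsides = {
    source: round(implied_upside(PRICE, target) * 100, 1)
    for source, target in TARGETS.items()
}

for source, pct in upsides.items():
    print(f"{source}: {pct}% implied upside")
```

The consensus figure lands in the high 50s, matching the +58% in the table, while the TipRanks and Benzinga averages imply somewhat more.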

Recent Downgrades

Piper Sandler downgraded Adobe from Overweight to Neutral. HSBC lowered its price target from $388 to $302. Oppenheimer downgraded from Outperform to Perform. Baird lowered its price target from $410 to $350.18 The downgrades cite increased competition from AI alternatives and concerns about growth sustainability, precisely the dynamic this paper documents.

5. SWOT ASSESSMENT: THE MULTI-DISCIPLINARY VIEW

STRENGTHS (What Adobe Controls)

Ecosystem Lock-In (LSS-BB): Creative Cloud represents the industry standard for professional creative workflows. Photoshop, Illustrator, Premiere, After Effects, InDesign: decades of muscle memory embedded in millions of users. Switching costs are high. Firefly integration leverages this installed base by making AI features available inside tools users already own.

Commercial Safety Moat (Safety Review): Adobe trains Firefly on licensed and public-domain content. IP indemnification — protecting users from copyright claims — represents a genuine competitive advantage. Enterprise clients care deeply about this. No other major AI image platform offers the same level of commercial safety assurance.

$5 Billion AI ARR (QASA verified): Adobe confirmed over $5 billion in AI-influenced annual recurring revenue at MAX 2025.4 Users are paying for AI features and using them. The revenue stream exists.

700 Million MAU (QASA verified): Adobe’s monthly active user base grew 25% year-over-year to 700 million.19 Any AI improvement immediately reaches a massive audience.

WEAKNESSES (What Adobe Cannot Fix Quickly)

Native Model Inferiority (Creative Arts, with evidence): The core finding of this paper. When tested head-to-head against partner models on Adobe’s own platform, Firefly’s native Image Model 5 produces gibberish text, non-functional diagrams, and outputs that Adobe’s own documentation classifies as a known limitation. Text rendering is a fundamental capability for professional creative work: thumbnails, posters, signage, logos, presentations.

Partner Dependency (Supply-Chain Review): Adobe’s best creative AI outputs now depend on Google, Black Forest Labs, Ideogram, OpenAI, Runway, and others. Each partner relationship introduces supply chain risk. Google can restrict Gemini access, change pricing, or build a competing creative platform (which it already has with Gemini’s native image generation). Adobe controls the storefront; partners control the inventory.

The Middleman Problem (LSS-BB): When a company’s value proposition shifts from “we build the best tool” to “we host the best tools,” margins compress and differentiation erodes. Adobe’s subscription price stays the same whether the user picks Firefly Image 5 or Gemini Flash. But Adobe pays licensing fees to Google for every Gemini generation. The more users prefer partner models, the more Adobe’s per-generation margin shrinks.

Engineer Morale Risk (OC observation): Adobe employs thousands of engineers building Firefly. When the company publicly integrates 10+ competitor models — implicitly acknowledging that users need alternatives to what Adobe’s team built — the internal signal is corrosive. Retention risk increases in a competitive AI talent market.

OPPORTUNITIES (What Could Go Right)

Platform Economics (LSS-BB): If Adobe executes the marketplace strategy correctly, it wins regardless of which model generates the output. Apple does not make every iPhone app. Salesforce does not build every AppExchange plugin. Platform companies can extract value from curation, integration, and distribution even when they do not own the underlying technology. Adobe’s Creative OS positioning (one subscription, all models) could become a genuine competitive moat if users value convenience over capability ownership.

Firefly Foundry Enterprise Play: Adobe Firefly Foundry allows enterprise customers to customize Google’s AI models on Vertex AI using proprietary brand data.20 This creates sticky, high-value enterprise relationships where switching costs are massive.

Credit System Monetization (LSS-BB): Adobe’s generative credit system creates a consumption-based revenue stream on top of subscriptions. As users consume more credits experimenting with partner models, Adobe collects revenue on every generation. When metered billing returns after the current promotional period, credit consumption becomes a growth vector.

Native Model Improvement (Creative Arts): Firefly Image Model 5, launched October 2025, introduced native 4-megapixel resolution and prompt-based editing in natural language.21 One breakthrough model release — particularly one that solves text rendering — could eliminate the middleman narrative overnight.

THREATS (What Keeps the CFO Awake)

Canva Direct Competition (OC): Canva integrates AI directly into a creative platform that costs less than Adobe Creative Cloud and targets the exact same “business professional to advanced creator” demographic. Canva’s acquisition of Kaleido AI and integration with Leonardo.ai position it as a vertically integrated creative AI platform, the model Adobe abandoned.

Google Disintermediation (Supply-Chain Review): Google provides Gemini models to Adobe as a partner. Google also runs Google Workspace, Google Slides, Google Photos, and YouTube — all platforms where AI-generated creative content deploys. Nothing prevents Google from offering Gemini image generation directly to its 3 billion+ Workspace users, cutting Adobe out entirely. Adobe is arming a competitor.

AI Commoditization (LSS-BB): As open-source models (Stable Diffusion, FLUX.2 variants) improve, the premium Adobe charges for hosted access compresses. When a designer can run FLUX locally for free and get better text rendering than Firefly, Adobe’s credit-based business model faces structural pressure.

Stock in Oversold Territory (QASA): RSI at 26.10 signals the stock is technically oversold. This creates short-term bounce potential but masks the structural question: is Adobe’s AI strategy a sustainable competitive advantage or a transitional holding pattern?

Subscription Fatigue and Pricing Pressure: Adobe Creative Cloud All Apps runs $59.99/month ($719.88/year). Firefly Pro adds $9.99/month. For a combined annual cost approaching $840, users expect the native AI tools to work at a competitive level without toggling through 10 partner model options. Community forums document growing frustration.22

6. THE MILITARY LENS: SUPPLY CHAIN VULNERABILITY

The Supply-Chain Review applies military supply chain doctrine to Adobe’s partner model architecture.

In military logistics, a formation that depends on a single external source for a critical capability is “combat ineffective without resupply.” If that source is a potential adversary or competitor, the dependency becomes a strategic vulnerability.

Adobe’s partner model architecture mirrors this pattern. Google supplies Gemini models to Adobe. Google also competes with Adobe in enterprise productivity (Workspace vs. Creative Cloud), AI image generation (Imagen vs. Firefly), and developer tools (Vertex AI vs. Adobe Firefly Foundry). Adobe is receiving ammunition from a formation that occupies an adjacent sector and could redraw the boundary lines at any time.

Network analysis adds precision to this vulnerability. In graph theory, betweenness centrality measures how frequently a node lies on the shortest path between other nodes in the network — it quantifies a node’s structural leverage over information and resource flow.23 Google occupies a high-betweenness node in Adobe’s dependency graph: it lies on the shortest paths between Adobe’s enterprise AI capabilities and its customers who require text rendering, photorealistic generation, and video production. A node with high betweenness centrality does not need to launch a frontal assault. It applies flow control — selectively restricting, repricing, or redirecting the paths it mediates.
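To make the betweenness argument concrete, the sketch below computes betweenness centrality on a toy dependency graph using Brandes' algorithm for unweighted, undirected graphs. The graph is a hypothetical simplification invented for illustration: the node names and edges are assumptions, not a measured map of Adobe's actual dependencies.

```python
from collections import deque


def betweenness(graph):
    """Brandes' algorithm for an unweighted, undirected graph (adjacency dict)."""
    score = {v: 0.0 for v in graph}
    for s in graph:
        stack = []
        preds = {v: [] for v in graph}   # predecessors on shortest paths from s
        sigma = {v: 0 for v in graph}    # number of shortest paths from s
        dist = {v: -1 for v in graph}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                     # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in graph[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in graph}
        while stack:                     # accumulate dependencies in reverse BFS order
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Each undirected pair was counted once from each endpoint.
    return {v: c / 2 for v, c in score.items()}


# Hypothetical dependency edges, for illustration only.
EDGES = [
    ("adobe", "google"),
    ("adobe", "native_model"),
    ("google", "text_users"),
    ("google", "video_users"),
    ("native_model", "photo_users"),
]
GRAPH = {}
for a, b in EDGES:
    GRAPH.setdefault(a, []).append(b)
    GRAPH.setdefault(b, []).append(a)

scores = betweenness(GRAPH)
print(sorted(scores.items(), key=lambda kv: -kv[1]))
```

In this toy graph the supplier node scores higher than the platform node itself, which is the structural point of the paragraph above: whoever sits on the most shortest paths controls the flow.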

The METT-TC(IT) analysis from Paper 1 applies directly:

| Variable | Adobe’s Position |
|---|---|
| Mission | Maintain creative software market leadership while transitioning to AI |
| Enemy | Google, Canva, Midjourney, open-source AI models, standalone tools |
| Terrain | Platform marketplace with 10+ partner model dependencies |
| Troops | Strong engineering force building native models that users bypass for partners |
| Time | 12-18 months before next major model release; stock under pressure now |
| Civil | 700M MAU expecting improvement; enterprise clients demanding IP safety |
| Info Tech | Multi-model routing architecture introduces latency, dependency, and data governance complexity |

7. THE LEAN PERSPECTIVE: IS THIS INNOVATION OR MUDA?

The LSS-BB asks a fundamental question: does Adobe’s multi-model marketplace create value or create waste?

In Lean terms, value is defined by the customer. If users value the ability to select from 10+ models inside one interface at one subscription price, the marketplace creates value. If users would prefer one model that works correctly across all dimensions — text, photorealism, composition, video — then the marketplace is a workaround for a deficiency, which Lean classifies as Muda (waste).

Community feedback suggests the latter. Fei Wu’s 2026 field guide for Firefly partner models opens with this observation: “We went from having one option to having ten. And for busy creators and business owners, that created a new problem: Choice Paralysis.”17

The Quality 4.0 framework from Paper 1 identifies this as a classic “over-engineering” anti-pattern. Instead of fixing the root cause (native model limitations), Adobe addressed the symptom (insufficient output quality) by adding complexity (10+ model options). The defect rate for the core product remains unchanged. The user bears the burden of quality assurance by manually selecting which engine to use for each generation.

Lean Six Sigma formalizes total waste burden as:

Total waste = Σ(category_waste_i × frequency_i)

Across the eight DOWNTIME categories, the dominant contributors by frequency are Defects (every text-rendering generation attempt with the native model), Motion (every model dropdown interaction), and Waiting (every credit burned on a defective output before switching). Adobe’s marketplace architecture did not reduce these frequencies — it redistributed them. The user now bears waste that the platform previously absorbed invisibly.
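The weighted sum above is straightforward to compute once each category has a per-event cost and a frequency. The sketch below shows the mechanics only. Every number in it is a placeholder invented for illustration (this paper did not measure per-category costs or frequencies), and the unit, credits per event, is likewise an assumption.

```python
def total_waste(entries):
    """Sum of (waste per event x frequency) across DOWNTIME categories."""
    return sum(cost * freq for cost, freq in entries.values())


# Placeholder values only: (credits wasted per event, events per week).
SAMPLE_WEEK = {
    "Defects": (2.0, 10),   # failed native text renders
    "Motion": (0.5, 20),    # model dropdown switches
    "Waiting": (1.0, 10),   # re-runs on a partner model
}

weekly_waste = total_waste(SAMPLE_WEEK)
dominant = max(SAMPLE_WEEK, key=lambda k: SAMPLE_WEEK[k][0] * SAMPLE_WEEK[k][1])
print(weekly_waste, dominant)
```

A practitioner would replace the placeholders with logged counts from actual sessions. The structure makes the redistribution argument testable: if the frequencies do not fall after partner models are added, the waste has moved rather than shrunk.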

A Black Belt would map this as a special cause variation problem masquerading as common cause. The native model has a systemic capability gap in text rendering — a repeating, assignable defect pattern rooted in the latent diffusion architecture described in Section 2, not random noise. Adding partner models does not reduce variation in the native model — it routes around it.

Process capability is formally expressed as:

Cpk = min[(USL − μ) / (3σ), (μ − LSL) / (3σ)]

where USL and LSL are the upper and lower specification limits for acceptable output, μ is the process mean, and σ is the process standard deviation. For professional creative work where readable text is the specification, only the lower limit applies: the LSL is “text is legible and correct,” so Cpk reduces to (μ − LSL) / (3σ). Adobe’s own documentation confirms the native model’s text limitation, and the IEEE survey places text accuracy across major AI image platforms below 45% for complex text integration,14 which means μ falls far below LSL for professional use cases. With μ below LSL, Cpk is negative. A process with negative Cpk is not just occasionally out of specification; its average output sits outside the specification.

Adobe’s “solution” is to offer users access to partner processes with higher Cpk — but those processes belong to Google, Black Forest Labs, and Ideogram. The native model’s Cpk for text rendering remains unchanged.
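The Cpk formula can be sketched directly from its definition. The example below assumes a hypothetical legibility score between 0 and 1 for each generated image and a lower specification limit of 0.9. Both the scoring scale and the sample values are invented for illustration and are not measurements from the tests in this paper.

```python
import statistics


def cpk(samples, lsl=None, usl=None):
    """Process capability index; uses only the specification limits that are provided."""
    mu = statistics.mean(samples)
    sigma = statistics.stdev(samples)  # sample standard deviation
    sides = []
    if usl is not None:
        sides.append((usl - mu) / (3 * sigma))
    if lsl is not None:
        sides.append((mu - lsl) / (3 * sigma))
    if not sides:
        raise ValueError("at least one specification limit is required")
    return min(sides)


# Hypothetical legibility scores (0 = gibberish, 1 = fully correct text).
weak_process = [0.20, 0.40, 0.30, 0.50, 0.35]
capable_process = [0.95, 0.97, 0.96, 0.98, 0.94]
LSL = 0.9  # assumed threshold for "legible and correct"

weak_cpk = cpk(weak_process, lsl=LSL)
capable_cpk = cpk(capable_process, lsl=LSL)
print(round(weak_cpk, 2), round(capable_cpk, 2))
```

With a mean below the lower limit, the first process returns a negative index regardless of how tight its spread is, which is the condition described above. Routing to a second process leaves the first process's index where it was.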

8. THE INVESTOR’S QUESTION

This paper does not recommend buying or selling Adobe stock. That determination requires individual risk assessment, portfolio context, and financial advisory consultation that exceeds this paper’s scope.

What this paper identifies: a structural tension between Adobe’s growth narrative (AI-powered creative platform) and Adobe’s operational reality (multi-model marketplace increasingly dependent on partner technology for its best outputs).

The analyst consensus — Buy at $409-$423 — prices in Adobe’s ecosystem strength, subscription revenue stability, and AI growth trajectory. It assumes Firefly’s competitive position will improve.

The hands-on evidence in this paper — same prompt, same platform, gibberish vs. readable text — suggests the competitive gap is wider than analyst models capture. Models built on revenue multiples and subscriber counts do not test whether the AI features driving that revenue actually work. This paper tested them.

The 43% stock decline over 12 months suggests some market participants already reached this conclusion. Whether the current $257 represents a floor (oversold bounce) or a waypoint (continued decline as AI competition intensifies) depends on whether Adobe can close the native model gap before the partner model strategy commoditizes into a table-stakes feature that every platform offers.

9. CONNECTIONS TO THE SERIES

This paper extends the Herding Cats framework in a specific direction: what happens when the “cats” you are herding belong to someone else?

Paper 1 established that AI needs doctrine, process discipline, and quality assurance — not more intelligence. Adobe’s Firefly demonstrates this thesis in real time. The native model possesses significant artistic capability. What it lacks is the structured, disciplined execution that turns artistic capability into professional-grade output. Text rendering is not an intelligence problem — it is a process discipline problem. The model treats letters as shapes rather than language. That is a training architecture decision, not a compute limitation.

The mechanism is precise: the self-attention mechanism in vision transformers operates over patches of an image simultaneously — it does not impose sequential left-to-right ordering across positions.24 Language generation works because the autoregressive attention mask enforces that each token attends only to prior tokens, creating the sequential structure that produces coherent text. Vision transformers lack this constraint by design — they are built for spatial, not sequential, understanding. A latent diffusion model guided by a vision encoder will produce letterform-shaped textures that resemble text without generating text. The architecture generates the appearance of language without the structure of language.
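The difference between the two attention patterns can be shown with plain masks. This is a deliberately simplified sketch: it builds the 0/1 visibility matrices only, with no learned weights, and it makes no claim about the internals of Firefly or any partner model. The function names are illustrative.

```python
def causal_mask(n):
    """Autoregressive mask: position i may attend only to positions 0..i."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


def full_mask(n):
    """Bidirectional mask, as used over image patches: every position sees every other."""
    return [[1] * n for _ in range(n)]


def visible_positions(mask, i):
    """Indices that position i is allowed to attend to."""
    return [j for j, allowed in enumerate(mask[i]) if allowed]


N = 4
causal = causal_mask(N)
full = full_mask(N)

for i in range(N):
    print(i, visible_positions(causal, i), visible_positions(full, i))
```

Under the causal mask each position depends only on what came before it, which is what gives generated text its left-to-right order. Under the full mask no such ordering exists, so nothing in the mask itself forces letter k to follow letter k-1.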

Paper 2 argued that the military’s C2 (command and control) frameworks solve coordination problems that civilian AI companies keep rediscovering. Adobe’s multi-model marketplace is an implicit admission that no single model can handle all creative tasks — the same insight the military codified decades ago with combined arms doctrine. But combined arms works because the Army controls all the arms. Adobe’s “combined models” strategy works only as long as partners cooperate.

Paper 3 demonstrated that a single practitioner’s vault became a laboratory for multi-agent coordination. The Firefly comparison test that generated this paper’s evidence ran inside that same vault workflow — Claude coordinated the prompt engineering, browser automation navigated Firefly’s interface, and the results landed in the knowledge management system Paper 3 documents.

Paper 8 later names the broader pattern the Toboggan Doctrine — template-driven channels that make the right decision the default decision, where the agent becomes a factory worker pushing templates around the work area, taking a ride on the reverse-entropy information enricher slide. By that frame, Adobe’s architecture is the anti-toboggan: the “channel” (model dropdown) has no gravity, no default, no institutional memory. The user provides the routing intelligence every time. A factory where each worker must reinvent the assembly line on every shift is not a factory — it is ten thousand artisans sharing a roof.

CONCLUSION: THE MIDDLEMAN’S DILEMMA

Adobe faces a strategic choice that the stock price will ultimately reflect.

Option A: Win the Engine Race. Invest aggressively in native Firefly model development. Close the text rendering gap. Produce a model that eliminates the need for partner alternatives. Expensive, uncertain, and time-constrained by a stock price that punishes quarterly misses.

Option B: Own the Marketplace. Accept that no single model will dominate all creative dimensions. Double down on the platform strategy: best-in-class interface, IP safety, enterprise customization, and model curation. Monetize the platform layer, not the generation layer. The App Store model applied to AI.

Option C: The Current Hybrid. Continue developing native models while expanding the partner ecosystem. Risk: the market may not give Adobe credit for either strategy if neither produces clearly differentiated results.

The Herding Cats metaphor lands precisely here. Adobe’s cats are brilliant, tireless, and fast — but half of them are Google’s cats, a quarter are Black Forest Labs’ cats, and the ones Adobe built cannot read the sign on the barn door.

The market priced in 43% doubt over the past year. Whether that doubt is excessive or insufficient depends on whether Adobe can teach its own cats to spell.

Field Test Gallery

The four figures below were captured on February 28, 2026, during live testing through Adobe Firefly’s interface. Test conditions: identical prompts submitted through the same Adobe Firefly account, with the same subscription and the same credit system. The only variable changed was the model selected in the dropdown.

Test Set 1 — PARA Architecture Wireframe

Prompt: “A wireframe architecture diagram of a PARA method knowledge vault (Projects, Areas, Resources, Archives) in Dracula theme colors, hierarchical structure with labeled sub-items”

Figure 4-1: Adobe Firefly native wireframe attempt. Dark-themed visualization with folder icons and connecting lines. Top-level labels partially readable. Sub-labels: gibberish (“b8524,” “20978”). No functional hierarchy. No logical data flow. Result: concept art, not a diagram.

Figure 4-2: Gemini result. Structured hierarchical diagram. All four PARA categories labeled. Sub-items contextually appropriate (“Q3 Marketing Campaign” under Projects, “Personal Development” under Areas). Logical parent-child hierarchy. Result: usable diagram.

Test Set 2 — Cyberpunk Cats Text Rendering

Prompt: “A pack of sleek black cats with glowing cyan bioluminescent circuit-board markings, hexagonal network elements, atmospheric depth, with text overlay: HERDING CATS IN AI AGE”

Figure 4-3: Adobe Firefly native result. Artistic quality: high. Text rendered: “BEARETIXSLUGE” / “PA’TEXCACT LEFIMENT” — complete gibberish. Mission-critical text rendering: FAIL.

Figure 4-4: Gemini result through the same Adobe platform, same subscription, same credits. Text rendered: “HERDING CATS IN AI AGE” — clear, bold, correctly spelled. Mission-critical text rendering: PASS.

Test verdict: Same platform. Same subscription. Same prompt. Google’s model renders readable text; Adobe’s own model produces nonsense letterforms. In Lean terms: Adobe outsourced its core value proposition to a competitor, and that competitor’s output is empirically superior — through Adobe’s own interface.
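The PASS/FAIL calls in Test Set 2 can be expressed as a simple repeatable check. The sketch below compares the requested overlay text with the text transcribed from each figure, using Python's standard `difflib` similarity ratio. The 0.9 threshold and the function names are assumptions chosen for illustration, and the rendered strings are the manual transcriptions given in the captions for Figures 4-3 and 4-4, not OCR output.

```python
from difflib import SequenceMatcher

REQUESTED = "HERDING CATS IN AI AGE"
# Transcribed by hand from the figure captions (Figures 4-3 and 4-4).
NATIVE_RENDERED = "BEARETIXSLUGE PA’TEXCACT LEFIMENT"
GEMINI_RENDERED = "HERDING CATS IN AI AGE"


def _normalize(text: str) -> str:
    """Uppercase and collapse whitespace so layout differences are ignored."""
    return " ".join(text.upper().split())


def text_render_score(requested: str, rendered: str) -> float:
    """Character-level similarity in [0, 1] between requested and rendered text."""
    return SequenceMatcher(None, _normalize(requested), _normalize(rendered)).ratio()


def passes(requested: str, rendered: str, threshold: float = 0.9) -> bool:
    """PASS if the rendered text is close enough to the requested text.

    The 0.9 threshold is an assumed cutoff, not a published standard.
    """
    return text_render_score(requested, rendered) >= threshold


if __name__ == "__main__":
    for name, rendered in [("native", NATIVE_RENDERED), ("gemini", GEMINI_RENDERED)]:
        verdict = "PASS" if passes(REQUESTED, rendered) else "FAIL"
        print(f"{name}: score={text_render_score(REQUESTED, rendered):.2f} {verdict}")
```

A score like this only covers spelling. It says nothing about artistic quality, which the captions assess separately.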

DISCLAIMER

This paper constitutes independent research and analysis. It does not constitute financial advice, investment recommendations, or solicitations to buy or sell securities. The author holds no position in Adobe (ADBE) stock. All stock data reflects publicly available information as of February 28, 2026. Readers making investment decisions based on any information in this paper do so at their own risk and should consult a qualified financial advisor.

© 2026 Jeep Marshall. Part of the “Herding Cats in the AI Age” research series.

Canonical source: herding-cats.ai/papers/paper-4-creative-middleman/ · Series tag: HCAI-d91ceb-P4

Series Navigation

| This paper | Paper 4 of 10 |
| Previous | ← Paper 3: The PARA Experiment |
| Next | Paper 5: When the Cats Talk to Each Other → |

Footnotes

- Benzinga, “Adobe (ADBE) Stock Price Prediction 2026, 2027 & 2030,” February 2026. Adobe declined 43% over the past year and 23% year-to-date.

- StockAnalysis, “Adobe (ADBE) Stock Forecast & Analyst Price Targets,” February 2026. 21 analysts, consensus Buy, avg target $409.14.

- Adobe MAX 2025 keynote, October 28, 2025; confirmed by Benzinga reporting.

- Fortune, “Adobe deepens Google Cloud partnership to advance AI,” October 30, 2025. Adobe EVP David Durn confirmed $5B+ AI-influenced ARR.

- Adobe/Google Cloud joint press release, “Expand Strategic Partnership to Advance the Future of Creative AI,” October 28, 2025.

- Adobe, “Partner models in Adobe products,” helpx.adobe.com, updated February 2026. Lists all current partner model integrations.

- Adobe, “Firefly partner models expand your creative power,” adobe.com/products/firefly/partner-models.html.

- Adobe Community Forums and allaboutai.com independent testing confirm: “Adobe Firefly (and many other AI models) cannot spell or recognize legible languages yet to render an accurate output.” The model treats text as shapes and textures, not as letters with meaning.

- Ho, J., Jain, A., & Abbeel, P. (2020). “Denoising Diffusion Probabilistic Models.” Advances in Neural Information Processing Systems (NeurIPS), 33. arXiv:2006.11239. Establishes the DDPM framework on which Stable Diffusion, Imagen, and Firefly’s architecture are built.

- Radford, A., Kim, J.W., Hallacy, C., et al. (2021). “Learning Transferable Visual Models From Natural Language Supervision.” International Conference on Machine Learning (ICML). OpenAI. Introduces CLIP — the contrastive image-text embedding model that guides latent diffusion generation. CLIP encodes semantic similarity at the concept level; it does not encode character-level sequential structure.

- The sequential-glyph problem in diffusion models is distinct from the text-to-image quality problem. Vision transformers (Dosovitskiy et al., 2020) process image patches in parallel using position embeddings — there is no causal mask enforcing sequential generation. Character sequences require ordered generation; diffusion processes do not impose ordering. This is the architectural source of Firefly’s text rendering failure.

- Adobe, “Known limitations in Firefly,” helpx.adobe.com, December 2025. Direct quote: “text and symbol generation in images still needs support.”

- Adobe Community Forums, confirmed by allaboutai.com comprehensive Firefly 5 review, December 2025.

- IEEE Transactions on Visualization and Computer Graphics, March 2024, survey on text generation accuracy in AI image platforms.

- Shazeer, N., Mirhoseini, A., Maziarz, K., et al. (2017). “Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer.” International Conference on Learning Representations (ICLR). arXiv:1701.06538. Canonical MoE reference. Introduces the gating function G(x) = softmax(W_g · x) with learned routing for sparse expert activation.

- Jacobs, R.A., Jordan, M.I., Nowlan, S.J., & Hinton, G.E. (1991). “Adaptive Mixtures of Local Experts.” Neural Computation, 3(1), 79–87. Original mixture of experts framework establishing the principle of learned routing between specialized subnetworks.

- Fei Wu, “Adobe Firefly Partner Models: The 2026 Field Guide,” feisworld.com, February 2026.

- TipRanks, Adobe analyst rating downgrades compilation, February 2026. HSBC ($388→$302), Piper Sandler (Overweight→Neutral), Oppenheimer (Outperform→Perform), Baird ($410→$350).

- Fortune, October 2025. Adobe EVP David Durn: “more than 700 million monthly active users, up 25% year-over-year.”

- Adobe/Google Cloud partnership details. Enterprise customers use Google’s AI models on Vertex AI with proprietary data through Firefly Foundry.

- Adobe MAX 2025 Firefly announcement. Image Model 5: native 4MP resolution, layered editing, prompt-based editing in natural language.

- Adobe Community Forums, multiple threads: “Firefly is terrible” (Nov 2024, ongoing), “Firefly has become worst” (May 2025), “Feedback on Adobe Firefly Image Generation Quality” (Oct 2025). Representative user: “Firefly is still completely unusable for any serious work.”

- Betweenness centrality: B(v) = Σ_{s≠v≠t} [σ(s,t|v) / σ(s,t)], where σ(s,t) is the total number of shortest paths from node s to node t, and σ(s,t|v) is the number of those paths passing through node v. High-betweenness nodes exercise structural leverage without requiring direct adversarial action — they simply control the paths. Freeman, L.C. (1977). “A Set of Measures of Centrality Based on Betweenness.” Sociometry, 40(1), 35–41.

- Dosovitskiy, A., Beyer, L., Kolesnikov, A., et al. (2020). “An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale.” International Conference on Learning Representations (ICLR 2021). arXiv:2010.11929. Vision Transformer (ViT) processes non-overlapping image patches with bidirectional self-attention — no causal mask, no sequential ordering constraint. This is the architectural basis for the text-rendering limitation described in Section 2.
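The betweenness centrality definition in the Freeman footnote above can be checked by hand on a toy graph. The sketch below is a brute-force implementation of B(v) for a small, unweighted, undirected graph, counting each unordered pair {s, t} once. The hub-and-spoke graph and its node names are hypothetical and chosen only to show how a middle node accumulates every path. They are not a model of any real vendor network.

```python
from collections import deque
from itertools import combinations


def bfs_counts(graph, source):
    """Return (dist, sigma): hop distance and shortest-path count from source."""
    dist = {source: 0}
    sigma = {source: 1}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for w in graph[u]:
            if w not in dist:  # first visit fixes the shortest distance
                dist[w] = dist[u] + 1
                sigma[w] = 0
                queue.append(w)
            if dist[w] == dist[u] + 1:  # u lies on a shortest path to w
                sigma[w] += sigma[u]
    return dist, sigma


def betweenness(graph):
    """B(v) = sum over unordered pairs {s, t}, s != v != t, of sigma(s,t|v) / sigma(s,t)."""
    info = {s: bfs_counts(graph, s) for s in graph}
    scores = {v: 0.0 for v in graph}
    for s, t in combinations(graph, 2):
        dist_s, sigma_s = info[s]
        if t not in dist_s:  # s and t are disconnected
            continue
        for v in graph:
            if v in (s, t):
                continue
            dist_v, sigma_v = info[v]
            on_path = v in dist_s and t in dist_v and dist_s[v] + dist_v[t] == dist_s[t]
            if on_path:
                # sigma(s,t|v) = sigma(s,v) * sigma(v,t) when v is on a shortest path
                scores[v] += sigma_s[v] * sigma_v[t] / sigma_s[t]
    return scores


# Hypothetical hub-and-spoke graph: every path runs through "platform".
GRAPH = {
    "platform": ["user", "model_a", "model_b", "model_c"],
    "user": ["platform"],
    "model_a": ["platform"],
    "model_b": ["platform"],
    "model_c": ["platform"],
}

if __name__ == "__main__":
    print(betweenness(GRAPH))  # platform scores 6.0, every leaf scores 0.0
```

With four leaves there are six unordered leaf pairs, and the single shortest path for each pair runs through the hub, so the hub scores 6 and every leaf scores 0.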